Differentially Private Gaussian Processes

Presented by Mike Smith, University of Sheffield

michaeltsmith.org.uk

m.t.smith@sheffield.ac.uk

@mikethomassmith

Differential Privacy for Gaussian Processes

Differential Privacy for GPs

We have a dataset in which the inputs, $X$, are public. The outputs, $\mathbf{y}$, we want to keep private.

The data consist of the heights and weights of 287 women from a census of the !Kung.

Vectors and Functions

Hall et al. (2013) make a function private by adding a scaled sample from its GP prior.

We show that (for many common kernels) the scale is

$d\;||K^{-1}||_\infty$
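As a rough illustration of the idea, the sketch below adds a scaled GP-prior sample to a posterior mean. It takes the expression above at face value as the sensitivity term and multiplies it by $c(\delta)/\varepsilon$ with $c(\delta)=\sqrt{2\log(2/\delta)}$ from Hall et al. (2013). The function names, the inclusion of the noise variance in $K$, and all numerical values are assumptions made for illustration, not the presenter's implementation.

```python
import numpy as np

def eq_kernel(a, b, lengthscale=25.0, variance=1.0):
    """Exponentiated quadratic (EQ) kernel between 1-D input arrays a and b."""
    sqdist = (a[:, None] - b[None, :]) ** 2
    return variance * np.exp(-0.5 * sqdist / lengthscale**2)

def c_delta(delta):
    """Constant from Hall et al. (2013): sqrt(2 log(2 / delta))."""
    return np.sqrt(2.0 * np.log(2.0 / delta))

def private_posterior_mean(X, y, X_test, d, eps, delta, noise_var=1.0, rng=None):
    """Posterior mean at X_test plus a scaled sample from the GP prior.

    d is an assumed bound on how much one output value can change.
    """
    rng = np.random.default_rng() if rng is None else rng
    # Assumption: K here includes the likelihood noise variance.
    K = eq_kernel(X, X) + noise_var * np.eye(len(X))
    K_inv = np.linalg.inv(K)
    mean = eq_kernel(X_test, X) @ K_inv @ y

    # Sensitivity term from the slide: d * ||K^{-1}||_inf (max absolute row sum).
    sensitivity = d * np.linalg.norm(K_inv, ord=np.inf)
    scale = sensitivity * c_delta(delta) / eps

    # Sample from the GP prior at the test inputs (small jitter for stability).
    K_ss = eq_kernel(X_test, X_test) + 1e-6 * np.eye(len(X_test))
    prior_sample = rng.multivariate_normal(np.zeros(len(X_test)), K_ss)
    return mean + scale * prior_sample, scale
```

Because the scale is proportional to $1/\varepsilon$, moving from $\varepsilon=100$ to $\varepsilon=1$ multiplies the added noise by 100 in this sketch.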

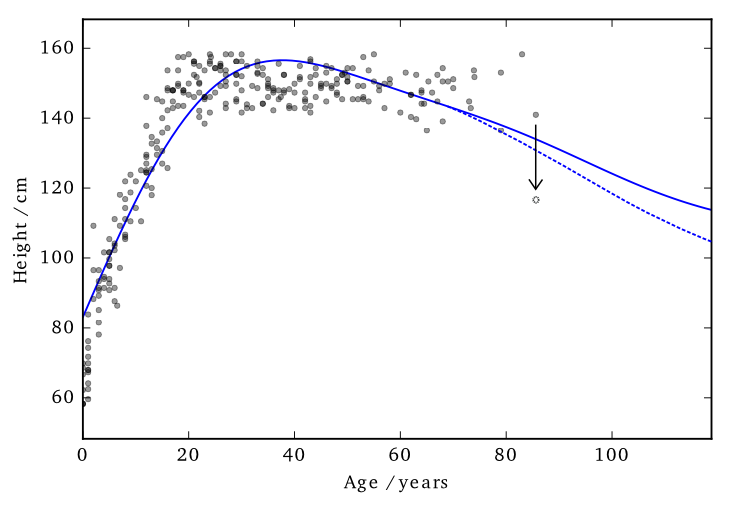

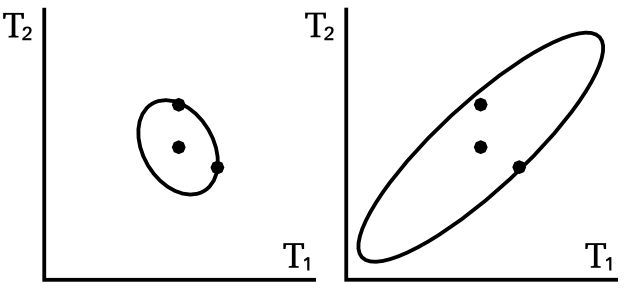

Applied to Gaussian Processes

This 'works' in that it allows DP predictions, but keeping the noise tolerable requires a value of $\varepsilon$ that is too large to give meaningful privacy (here it is 100).

EQ kernel, $l = 25$ years, $\Delta=100$cm

Cloaking

So far we've made the whole posterior mean function private...

...what if we just concentrate on making particular predictions private?

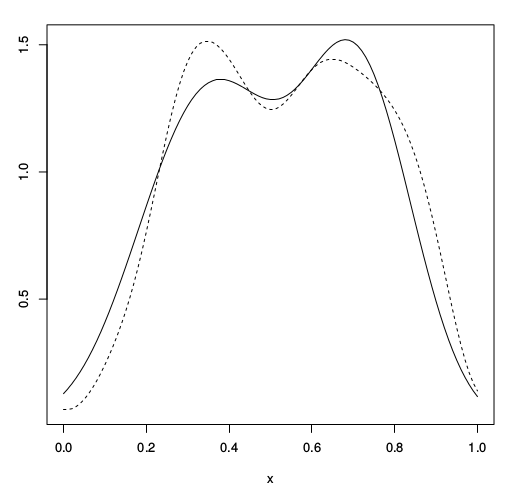

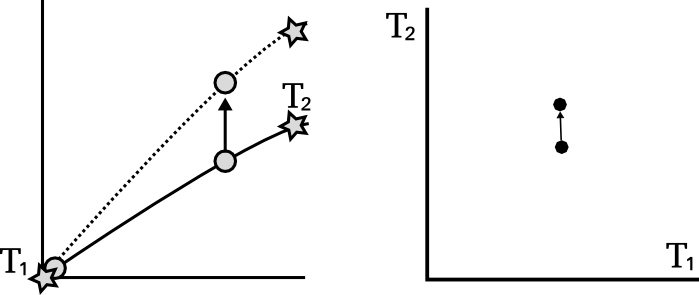

Effect of perturbation

Previously I mentioned that the noise is sampled from the GP's prior.

This is not necessarily the most 'efficient' covariance to use.

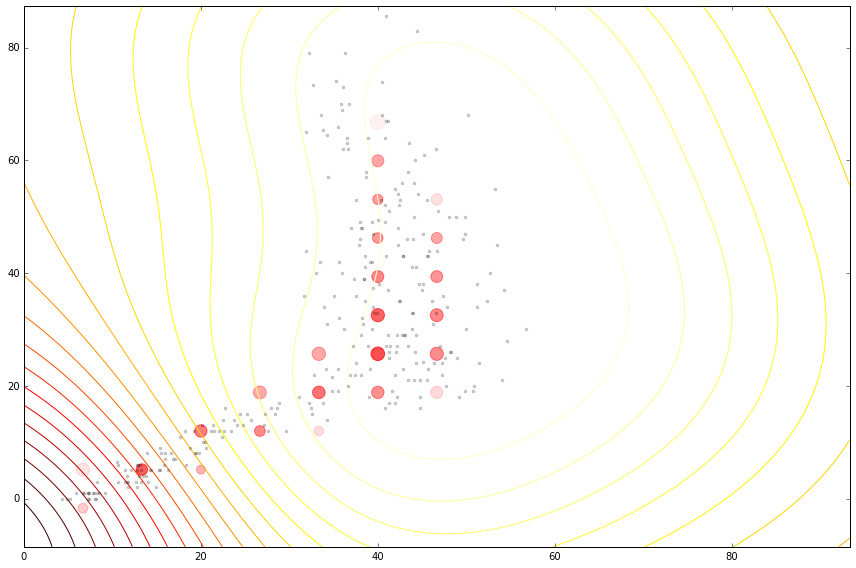

Cloaking

Left: ideal covariance. Right: actual covariance.

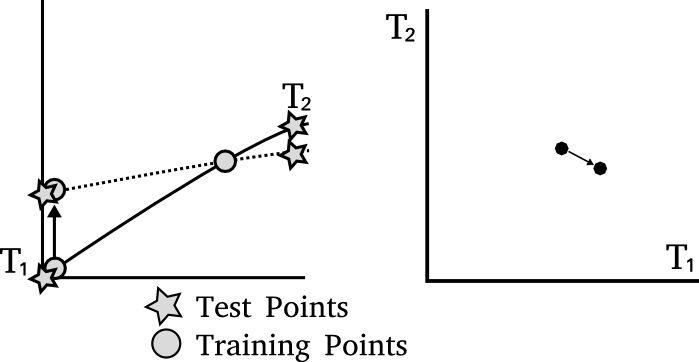

DP Vectors

Hall et al. (2013) also show how to add noise to a vector

$\frac{\text{c}(\delta)\Delta}{\varepsilon} \mathcal{N}_d(0,M)$

where

$\sup_{D \sim {D'}} ||M^{-1/2} (\mathbf{y}_* - \mathbf{y}_{*}')||_2 \leq \Delta$

We get to pick $M$

$M = \sum_i{\lambda_i \mathbf{c}_i \mathbf{c}_i^\top}$
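The construction above can be sketched in a few lines. The sketch assumes the test predictions are linear in the training outputs, $\mathbf{y}_* = C\mathbf{y}$ with $C = K_{*f}K^{-1}$ and $\mathbf{c}_i$ the $i$-th column of $C$, and that a single output can change by at most $d$, so that $\Delta = d \max_i ||M^{-1/2}\mathbf{c}_i||_2$. The uniform $\lambda$ is a placeholder rather than a tuned choice, and all function names and values are hypothetical.

```python
import numpy as np

def eq_kernel(a, b, lengthscale=25.0, variance=1.0):
    """Exponentiated quadratic (EQ) kernel between 1-D input arrays a and b."""
    sqdist = (a[:, None] - b[None, :]) ** 2
    return variance * np.exp(-0.5 * sqdist / lengthscale**2)

def c_delta(delta):
    """Constant from Hall et al. (2013): sqrt(2 log(2 / delta))."""
    return np.sqrt(2.0 * np.log(2.0 / delta))

def cloaking_matrix(X, X_test, noise_var=1.0):
    """C = K_{*f} K^{-1}, so that the posterior mean at X_test is C @ y."""
    K = eq_kernel(X, X) + noise_var * np.eye(len(X))
    # K is symmetric, so solve(K, K_{f*}).T equals K_{*f} K^{-1}.
    return np.linalg.solve(K, eq_kernel(X, X_test)).T

def noise_covariance(C, lam):
    """M = sum_i lam_i c_i c_i^T, where c_i is column i of C."""
    return (C * lam) @ C.T

def sensitivity(C, M, d):
    """Delta = d * max_i sqrt(c_i^T M^{-1} c_i): one output moves by at most d."""
    M_inv_C = np.linalg.solve(M, C)
    return d * np.sqrt(np.max(np.sum(C * M_inv_C, axis=0)))

def sample_dp_noise(M, Delta, eps, delta, rng):
    """Draw (c(delta) * Delta / eps) * N(0, M)."""
    scale = c_delta(delta) * Delta / eps
    return scale * rng.multivariate_normal(np.zeros(M.shape[0]), M)

# Hypothetical example: 8 training inputs, 3 test inputs, uniform lambda.
X = np.linspace(5.0, 60.0, 8)
X_test = np.array([10.0, 30.0, 50.0])
C = cloaking_matrix(X, X_test)
lam = np.ones(C.shape[1])
M = noise_covariance(C, lam)
Delta = sensitivity(C, M, d=100.0)
noise = sample_dp_noise(M, Delta, eps=1.0, delta=0.01, rng=np.random.default_rng(0))
```

Since $M \succeq \lambda_i \mathbf{c}_i \mathbf{c}_i^\top$ for every $i$, each term satisfies $\mathbf{c}_i^\top M^{-1} \mathbf{c}_i \leq 1/\lambda_i$, which is what makes the choice of $\lambda$ a handle on the sensitivity.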

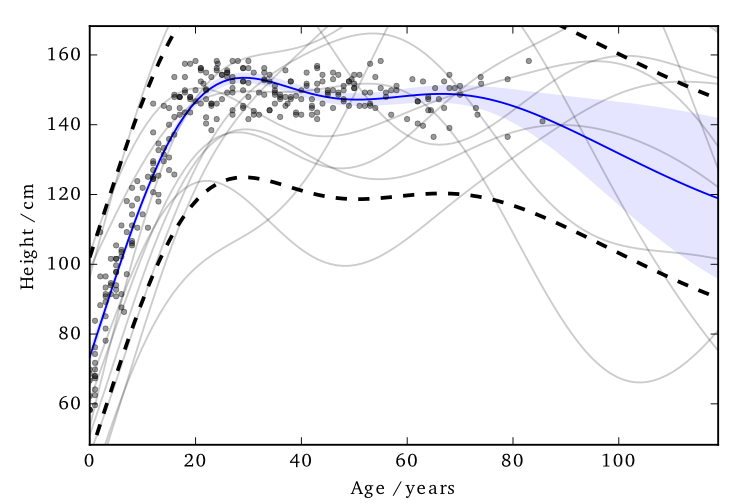

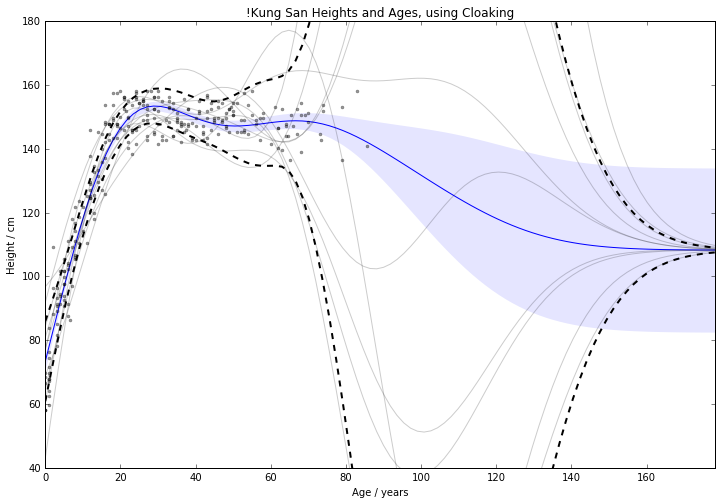

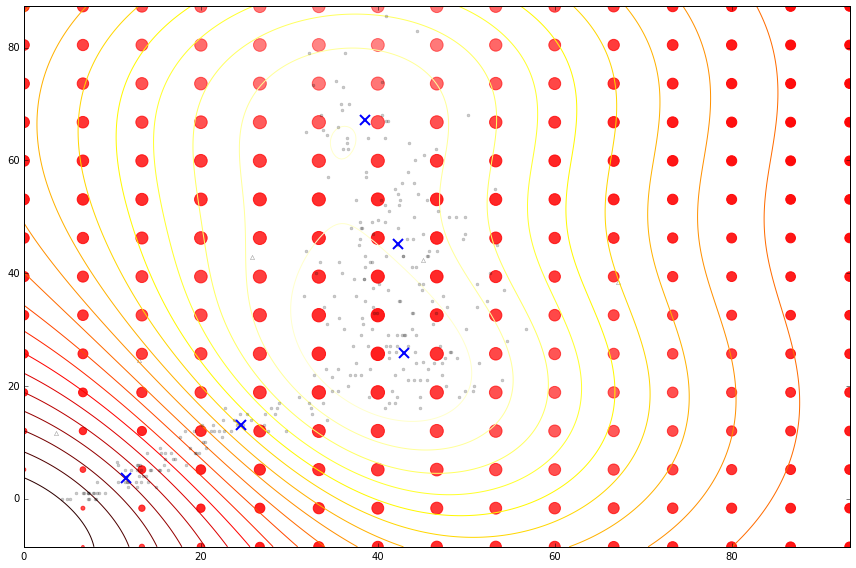

Cloaking: Results

The noise added by this method is now practical.

EQ kernel, $l = 25$ years, $\Delta=100$cm, $\varepsilon=1$

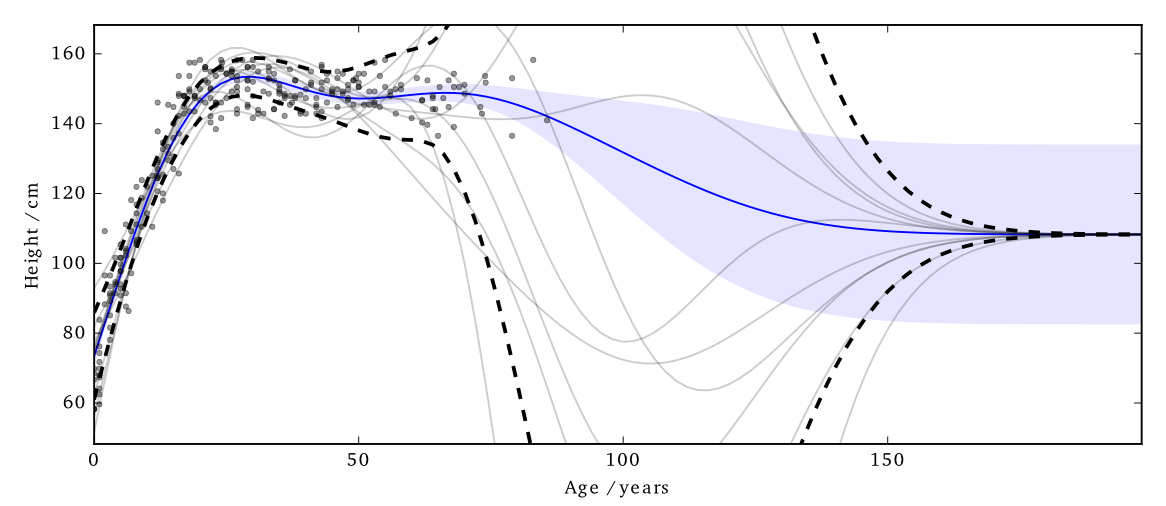

House prices around London

Citibike

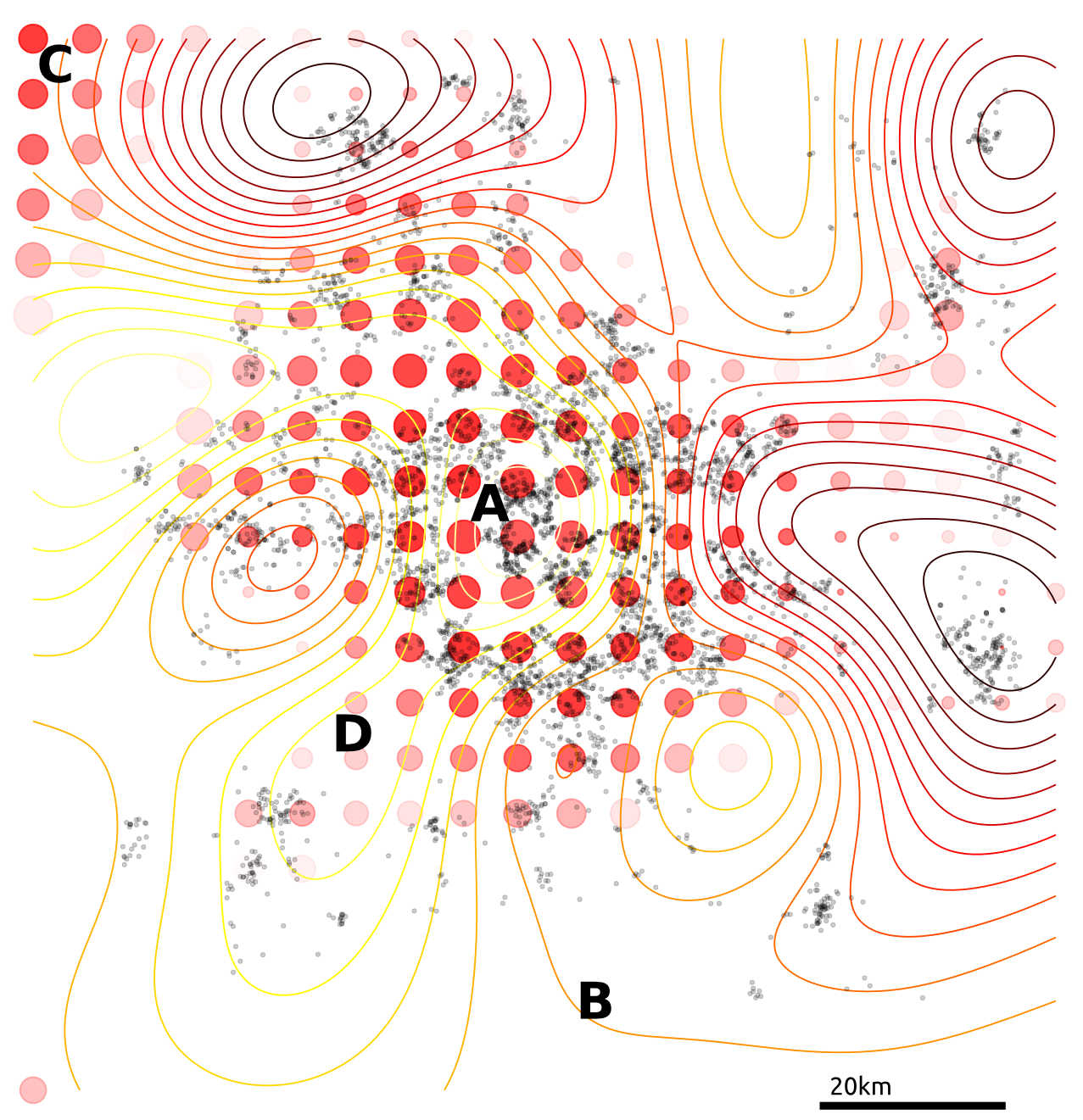

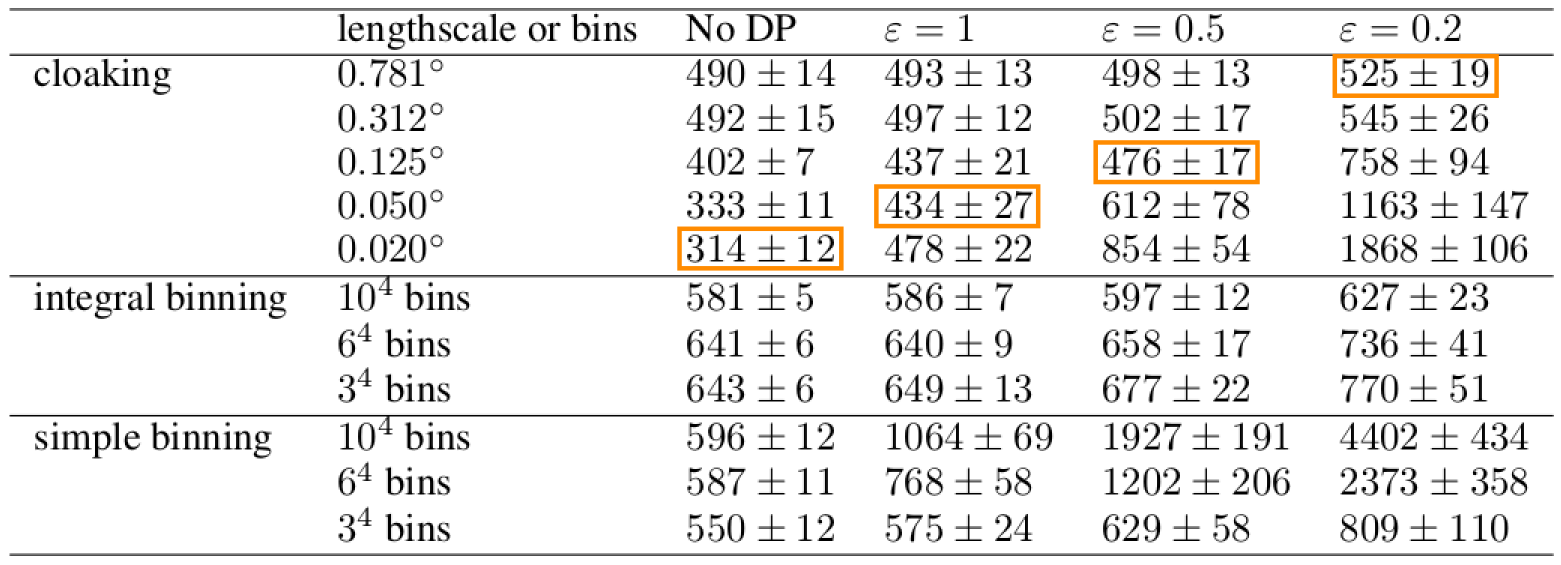

Tested on the 4d Citibike dataset (predicting journey durations from start and finish station locations).

The method appears to achieve lower noise than binning alternatives (for reasonable $\varepsilon$).

lengthscale in degrees, values above, journey duration (in seconds)

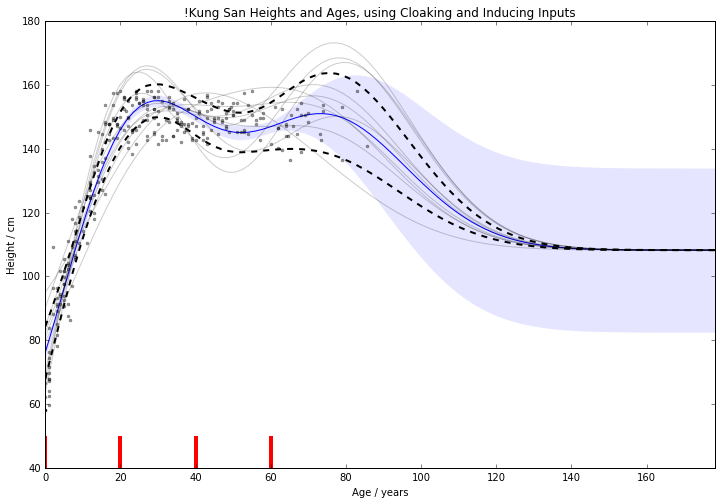

Cloaking and Inducing Inputs

Outliers poorly predicted.

Too much noise around data 'edges'.

Use inducing inputs to reduce the sensitivity to these outliers.

Cloaking (no) Inducing Inputs

Cloaking and Inducing Inputs

Results

For 1d !Kung, RMSE improved from $15.0 \pm 2.0 \text{cm}$ to $11.1 \pm 0.8 \text{cm}$.

Use Age and Weight to predict Height

For 2d !Kung, RMSE improved from $22.8 \pm 1.9 \text{cm}$ to $8.8 \pm 0.6 \text{cm}$.

Note that the uncertainty across cross-validation runs is also smaller.

The 2d version benefits from the data lying close to a 1d manifold.

Summary: We have developed an improved method for performing differentially private regression.

Future work: multiple outputs, GP classification, DP hyperparameter optimisation, making the inputs private.

Thanks: Funders: EPSRC; Colleagues: Mauricio, Neil, Max.

Recruiting: Deep Probabilistic Models, 2 year postdoc (tinyurl.com/shefpostdoc)

The go-to book on differential privacy, by Dwork and Roth:

Dwork, Cynthia, and Aaron Roth. "The algorithmic foundations of differential privacy." Foundations and Trends in Theoretical Computer Science 9.3-4 (2014): 211-407.

I found this paper allowed me to start applying DP to GPs:

Hall, Rob, Alessandro Rinaldo, and Larry Wasserman. "Differential privacy for functions and functional data." The Journal of Machine Learning Research 14.1 (2013): 703-727.

Articles about the Massachusetts privacy debate

Barth-Jones, Daniel C. "The 're-identification' of Governor William Weld's medical information: a critical re-examination of health data identification risks and privacy protections, then and now." (2012).

Ohm, Paul. "Broken promises of privacy: Responding to the surprising failure of anonymization." UCLA Law Review 57 (2010): 1701.

Narayanan, Arvind, and Edward W. Felten. "No silver bullet: De-identification still doesn't work." White Paper (2014).

Howell, N. Data from a partial census of the !Kung San, Dobe. 1967-1969. https://public.tableau.com/profile/john.marriott#!/vizhome/kung-san/Attributes, 1967.

Images used: BostonGlobe: Mass Mutual, Weld. Harvard: Sweeney. Rich on flickr: Sheffield skyline.